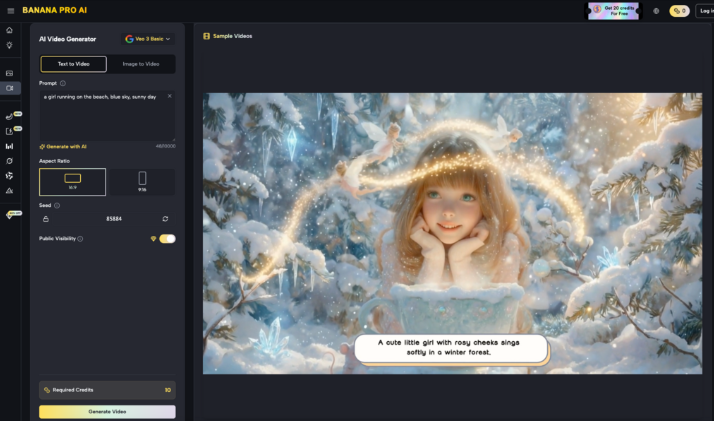

The shift from experimental AI usage to standardized creative operations requires a transition from “prompting for fun” to “routing for efficiency.” In a professional content team, the question is no longer whether AI can generate an image, but which specific model should handle a particular stage of the production pipeline. This concept of model routing—matching the specific strengths of a latent diffusion model or a video transformer to the immediate task—is where the real margin is found.

When working within an ecosystem like Banana AI, operators are presented with a suite of engines: Banana 2 AI, Midjourney, Seedance 2.0, and the high-efficiency Nano Banana Pro. Each carries a different weight in terms of prompt adherence, aesthetic bias, and inference speed. For a creative lead, the goal is to build a repeatable asset pipeline that doesn’t over-rely on a single “jack-of-all-trades” model, which often leads to higher costs or slower turnarounds.

Stage 1: Low-Fidelity Prototyping and Layout

The earliest stage of any visual project is the “greyboxing” phase. Here, the operator isn’t looking for a final, high-resolution render; they are looking for composition, color palette, and general subject placement. Running a heavy, VRAM-intensive model at this stage wastes resources.

Nano Banana Pro excels in this initial environment. Its architecture is optimized for speed and directional accuracy. When an operator is testing fifty different layout variations for a social media campaign, the lower latency of Nano Banana allows for a tighter feedback loop. At this stage, the team is evaluating the “vibe” rather than the texture of a fabric or the specific anatomy of a hand.
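The fifty-variation loop is easiest to run when the variations are built systematically rather than typed by hand. The sketch below assumes a hypothetical prompt template and illustrative option lists; none of these values come from Banana AI tooling. It crosses composition, palette, and placement so that each prompt differs only in layout-level attributes, which keeps review focused on direction rather than texture.

```python
from itertools import product

# Illustrative option lists for a greyboxing pass (hypothetical values).
COMPOSITIONS = ["centered subject", "rule-of-thirds left", "rule-of-thirds right",
                "low-angle wide", "overhead flat lay"]
PALETTES = ["warm neutrals", "cool pastels"]
PLACEMENTS = ["product in hand", "product on table", "product on shelf",
              "product in bag", "product on window ledge"]


def layout_variations(base_prompt, compositions, palettes, placements, limit=None):
    """Return one prompt per (composition, palette, placement) combination.

    Only layout-level attributes vary, so reviewers compare overall direction
    rather than fine texture. `limit` optionally caps the batch size.
    """
    prompts = []
    for comp, palette, place in product(compositions, palettes, placements):
        if limit is not None and len(prompts) >= limit:
            break
        prompts.append(f"{base_prompt}, {comp}, {palette} palette, {place}")
    return prompts


grid = layout_variations("sneaker campaign, rough layout", COMPOSITIONS, PALETTES, PLACEMENTS)
print(len(grid))  # prints 50 (5 * 2 * 5)
print(grid[0])
```

Five compositions, two palettes, and five placements give exactly 50 prompts; `limit` caps the batch if the grid grows.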

It is important to note a limitation here: early-stage prototyping often suffers from what we call “compositional drift.” Even with precise prompting, low-latency models can struggle with complex spatial relationships—for example, trying to place a very specific object “behind the glass but in front of the curtains.” In these cases, operators must accept that the prototype is a directional guide, not a blueprint.

Stage 2: Core Asset Generation and The Aesthetic Choice

Once the direction is locked, the workflow moves into the generation of the “hero” asset. This is where model routing becomes highly subjective and depends on the final medium. If the project requires a hyper-stylized, “cinematic” look that leans into the classic AI aesthetic, Midjourney or Banana 2 AI are the traditional choices.

However, for assets that need to maintain a degree of grounded realism or serve as a base for further manipulation, Nano Banana offers a cleaner canvas. The model tends to be less “opinionated” than its larger counterparts. While a model like Seedream 5.0 might inject a heavy amount of post-processing or lighting effects by default, Nano Banana Pro provides a flatter, more workable image that is easier to grade in post-production.

For a creative operations lead, this is a decision between “Model-as-Artist” and “Model-as-Tool.” If you want the AI to do the creative heavy lifting, you route to the high-parameter models. If you want the AI to provide a high-quality raw asset that your human designers will then take over, the Nano Banana series is often the more pragmatic choice.

Stage 3: Refinement Through the Canvas Workflow

Generation is rarely the final step. Professional workflows almost always require a dedicated AI Image Editor to fix artifacts or adjust specific regions of an image. This is the stage where the “Canvas Workflow” in the Banana Pro ecosystem becomes the primary interface.

The transition from a text-to-image prompt to an image-to-image or in-painting task is a critical pivot point. Here, the operator uses the existing asset as a “structural reference.” The goal might be to swap a product out of a model’s hand or change the background of a lifestyle shot without altering the subject’s face.

The Banana Pro toolset allows for this surgical precision. Instead of re-rolling the entire prompt and hoping for a similar result—a process that is notoriously unreliable in generative AI—the operator isolates the problem area. However, there is a technical uncertainty here that teams must account for: “seam visibility.” Even with advanced masking, there is occasionally a shift in noise grain or lighting temperature between the generated patch and the original image. This requires a final pass of manual color correction or a global “soft upscale” to unify the textures.
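One common way to reduce the lighting-temperature part of seam visibility is to shift the patch’s color statistics toward the surrounding pixels. The sketch below is a deliberately simplified, stdlib-only version of that idea. It assumes the image is a flat list of RGB tuples and the in-paint mask is a parallel list of booleans; both representations are hypothetical stand-ins for whatever the editor actually exports. It matches per-channel means only and does nothing about differences in noise grain, which still call for the upscale or manual pass.

```python
def channel_means(pixels, mask, want):
    """Average each RGB channel over the pixels whose mask value equals `want`."""
    selected = [p for p, m in zip(pixels, mask) if m == want]
    if not selected:
        raise ValueError("mask selects no pixels")
    n = len(selected)
    return tuple(sum(p[c] for p in selected) / n for c in range(3))


def match_patch_color(pixels, mask):
    """Shift in-painted pixels so their channel means match the original region.

    pixels: list of (r, g, b) values in the range 0..255
    mask:   list of bools, True where the pixel belongs to the generated patch
    Returns a new list; pixels outside the patch are copied unchanged. Values
    are clamped to 0..255, so heavily clipped patches may not match exactly.
    """
    patch_mean = channel_means(pixels, mask, True)
    base_mean = channel_means(pixels, mask, False)
    offset = tuple(b - p for b, p in zip(base_mean, patch_mean))
    corrected = []
    for pixel, in_patch in zip(pixels, mask):
        if in_patch:
            corrected.append(tuple(min(255.0, max(0.0, pixel[c] + offset[c]))
                                   for c in range(3)))
        else:
            corrected.append(pixel)
    return corrected


# Two original pixels and a warmer, brighter two-pixel patch.
pixels = [(100, 100, 100), (110, 100, 90), (130, 110, 80), (140, 120, 90)]
mask = [False, False, True, True]
print(match_patch_color(pixels, mask))
```

A real pipeline would operate on full image arrays and usually feather the mask edge, but the principle is the same: measure the mismatch outside the patch and correct only inside it.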

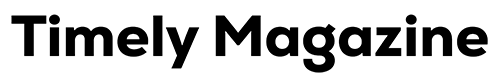

Stage 4: Temporal Expansion and Video Integration

For teams moving into motion, workflow complexity rises sharply. Routing a static image into a video engine like Seedance 2.0 requires a clear understanding of temporal consistency: how well the subject, lighting, and style hold steady from frame to frame.

A common pitfall in creative ops is trying to generate a video from a prompt that is too complex. A more successful route is the “Image-to-Video” pipeline. By using a high-quality frame generated by Banana Pro as the “Seed Image,” the video model has a concrete visual anchor. This significantly reduces the chances of the subject morphing into something else halfway through the clip.

When using Seedance 2.0 for professional delivery, operators should expect a “temporal decay” limitation. Current generative video technology still struggles to maintain structural integrity over clips longer than a few seconds. For a 30-second ad, the workflow shouldn’t be a single 30-second generation. Instead, it should be a series of 2-3 second “shots” generated using the same Nano Banana Pro-derived style guide, then stitched together in a traditional non-linear editor. This “modular” approach is the most reliable way to keep the brand look consistent across a video project.
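The shot breakdown is simple arithmetic, but writing it down keeps every shot inside the duration where the video model holds together. The sketch below assumes a hypothetical `ShotSpec` record and placeholder file and style-guide names; it divides the spot into the fewest equal shots that respect a maximum length. It only produces the plan; submitting the shots to Seedance 2.0 and stitching them in the editor remain separate steps.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ShotSpec:
    index: int
    start_s: float
    duration_s: float
    seed_image: str   # hypothetical path to the anchor frame for image-to-video
    style_guide: str  # hypothetical ID of the shared style guide


def plan_shots(total_s, max_shot_s, seed_image, style_guide):
    """Split a spot into equal-length shots no longer than `max_shot_s` seconds.

    Every shot carries the same style guide so the stitched edit stays
    consistent. Simplification: one shared seed image; a real edit would
    usually give each shot its own anchor frame.
    """
    if total_s <= 0 or max_shot_s <= 0:
        raise ValueError("durations must be positive")
    count = math.ceil(total_s / max_shot_s)
    duration = total_s / count
    return [ShotSpec(i, i * duration, duration, seed_image, style_guide)
            for i in range(count)]


shots = plan_shots(30, 3, "frames/hero_v4.png", "brand-style-01")
for shot in shots[:2]:
    print(shot)
```

With a 30-second spot and a 3-second ceiling this yields ten 3-second shots; lowering the ceiling to 2.5 seconds yields twelve.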

The Decision Matrix: Speed, Fidelity, and Cost

Managing a content team means balancing the “Iron Triangle” of production. Nano Banana Pro is positioned for the “Speed” and “Cost” legs of that triangle, while retaining enough “Fidelity” for its assets to remain usable.

- High Volume / Low Stakes: (e.g., daily social posts, internal mood boards). Route entirely to Nano Banana. The cost-per-image and time-to-delivery are the primary KPIs.

- Low Volume / High Stakes: (e.g., website hero images, print ads). Use Midjourney or Banana 2 AI for the initial “Hero,” then use the Banana Pro editing tools for the final 10% of refinement.

- Iterative Development: (e.g., character design, product concepts). Use a hybrid approach. Rapidly iterate with Nano Banana until the silhouette is right, then “upscale” the concept into a higher-fidelity model for final textures.
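The decision matrix can be written down as a small lookup so that routing choices are explicit and reviewable. In this sketch the three brief types mirror the matrix; the engine names are plain labels from this article, not calls into any Banana AI API, and the default `hifi_engine` for the iterative route is an assumption, since the matrix only says “a higher-fidelity model.”

```python
from enum import Enum


class BriefType(Enum):
    HIGH_VOLUME_LOW_STAKES = "high volume / low stakes"
    LOW_VOLUME_HIGH_STAKES = "low volume / high stakes"
    ITERATIVE = "iterative development"


def route(brief, hero_engine="Midjourney", hifi_engine="Banana 2 AI"):
    """Return the ordered (stage, engine) steps for a brief type.

    hero_engine: Midjourney or Banana 2 AI, per the matrix.
    hifi_engine: assumed default for the "upscale to higher fidelity" step.
    """
    if brief is BriefType.HIGH_VOLUME_LOW_STAKES:
        # Cost per image and time to delivery are the KPIs: stay on the fast model.
        return [("generate", "Nano Banana Pro")]
    if brief is BriefType.LOW_VOLUME_HIGH_STAKES:
        # Let the stronger aesthetic model make the hero, then fix details in the editor.
        return [("hero", hero_engine), ("refine final 10%", "Banana Pro editing tools")]
    if brief is BriefType.ITERATIVE:
        # Iterate cheaply until the silhouette is right, then move up for textures.
        return [("iterate silhouette", "Nano Banana Pro"), ("final textures", hifi_engine)]
    raise ValueError(f"unknown brief type: {brief!r}")


for brief in BriefType:
    print(brief.value, "->", route(brief))
```

Keeping the matrix in one function means a change in routing policy is a visible, reviewable diff rather than an individual operator’s habit.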

Operators must remain skeptical of “one-click” solutions. The reality of generative media in 2024 and 2025 is that the best results come from a human orchestrator moving assets between different specialized engines.

Technical Guardrails and Human-in-the-Loop

A significant portion of creative operations is actually “quality control.” When using AI models at scale, teams must implement a review stage. Even the strongest models in the Banana AI ecosystem can produce “hallucinations”: floating limbs, nonsensical text on background signage, or physics-defying shadows.

Within the Banana Pro interface, the workflow studio is designed to facilitate this review. It allows operators to see the lineage of an asset—from the initial prompt to the final in-painted result. If a team finds that a specific prompt is consistently failing in Nano Banana Pro, they can “route up” to a more complex model or, conversely, simplify the prompt and rely more on the image-to-image tools.
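Lineage and “routing up” are both straightforward bookkeeping. The sketch below assumes a hypothetical `Asset` record with a parent pointer, which is enough to replay the chain from the first prompt to the final in-painted result, plus a hypothetical escalation ladder and failure threshold for moving a stubborn prompt to a heavier model. It does not model the alternative path of simplifying the prompt and leaning on image-to-image tools, and it is not how the Banana Pro workflow studio necessarily stores lineage.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Asset:
    asset_id: str
    operation: str                      # e.g. "text-to-image", "in-paint"
    engine: str
    parent: Optional["Asset"] = None    # the asset this one was derived from

    def lineage(self):
        """Return (id, operation, engine) steps from the root asset to this one."""
        chain = []
        node = self
        while node is not None:
            chain.append((node.asset_id, node.operation, node.engine))
            node = node.parent
        return list(reversed(chain))


# Hypothetical escalation ladder, lightest model first.
ESCALATION = ["Nano Banana Pro", "Banana 2 AI"]


def next_engine(current, failures, threshold=3):
    """Route up one rung once a prompt has failed review `threshold` times.

    Stays on the top rung if already there; raises ValueError for engines
    that are not on the ladder.
    """
    if failures < threshold:
        return current
    rung = ESCALATION.index(current)
    return ESCALATION[min(rung + 1, len(ESCALATION) - 1)]


draft = Asset("a1", "text-to-image", "Nano Banana Pro")
fixed = Asset("a2", "in-paint", "Banana Pro editor", parent=draft)
print(fixed.lineage())
print(next_engine("Nano Banana Pro", failures=3))
```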

We must also be honest about the learning curve. While the UI is becoming more intuitive, the “logic” of model routing isn’t something that can be fully automated yet. An operator needs a “feel” for how a specific model reacts to lighting cues versus textural cues. Nano Banana might be great at “soft morning light” but struggle with “brutalist concrete textures” compared to a model with a larger training set. This nuanced knowledge is what defines a senior AI creative operator.

Future-Proofing the Workflow

The generative AI landscape changes monthly. A workflow built exclusively around one version of one model is a workflow that will be obsolete by next quarter. The value of an integrated platform like Banana Pro AI is the ability to swap the underlying engines without changing the entire user interface or the team’s internal SOPs.

By standardizing on a canvas-based workflow, teams can stay tool-agnostic. Whether they are using Nano Banana today or an upgraded version next month, the “Stage-Gate” process remains the same:

- Ideate in a low-latency environment.

- Generate the core visual anchor.

- Refine using surgical editing tools.

- Animate using temporal diffusion if movement is required.
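The point of the Stage-Gate list is that the stages stay fixed while the engines behind them change. A minimal sketch, assuming a hypothetical registry dictionary that maps each stage to whichever engine the team currently uses: swapping an engine is a one-line change to the registry, and the stage order, which is the SOP, is untouched.

```python
# The four gates, in SOP order; this list is the part that should not change.
STAGES = ["ideate", "generate", "refine", "animate"]


def build_plan(engines, needs_motion):
    """Map each stage to the engine currently registered for it.

    engines: dict of stage name -> engine label (hypothetical registry)
    needs_motion: skip the animate gate for static deliverables
    """
    plan = []
    for stage in STAGES:
        if stage == "animate" and not needs_motion:
            continue
        if stage not in engines:
            raise KeyError(f"no engine registered for stage '{stage}'")
        plan.append((stage, engines[stage]))
    return plan


engines = {
    "ideate": "Nano Banana Pro",
    "generate": "Midjourney",
    "refine": "Banana Pro editor",
    "animate": "Seedance 2.0",
}
print(build_plan(engines, needs_motion=False))

# Next quarter: swap one engine (placeholder name), keep the process.
upgraded = {**engines, "ideate": "Nano Banana (next version)"}
print(build_plan(upgraded, needs_motion=True))
```

Because the gates are data rather than hard-coded calls, a model upgrade only touches the registry, which is the tool-agnostic property this workflow depends on.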

This structured approach treats AI as a series of specialized workstations rather than a magic box. It acknowledges the limitations—the flicker in video, the occasional anatomical error, the need for manual color grading—and builds a process that accounts for them.

Ultimately, the goal of creative ops around Nano Banana Pro is to remove the “randomness” of generative art. By mapping specific models to specific stages, teams can predict their output quality, control their budgets, and deliver assets that actually meet the technical requirements of professional media. The “pro” in the name isn’t just about the model’s parameters; it’s about the professional discipline of the person operating it.